OpenAI CEO Sam Altman says AI will reshape society, acknowledges risks: ‘A little bit scared of this’

The CEO behind the company that created ChatGPT believes artificial intelligence technology will reshape society as we know it. He believes it comes with real dangers, but can also be “the greatest technology humanity has yet developed” to drastically improve our lives.

“We’ve got to be careful here,” said Sam Altman, CEO of OpenAI. “I think people should be happy that we are a little bit scared of this.”

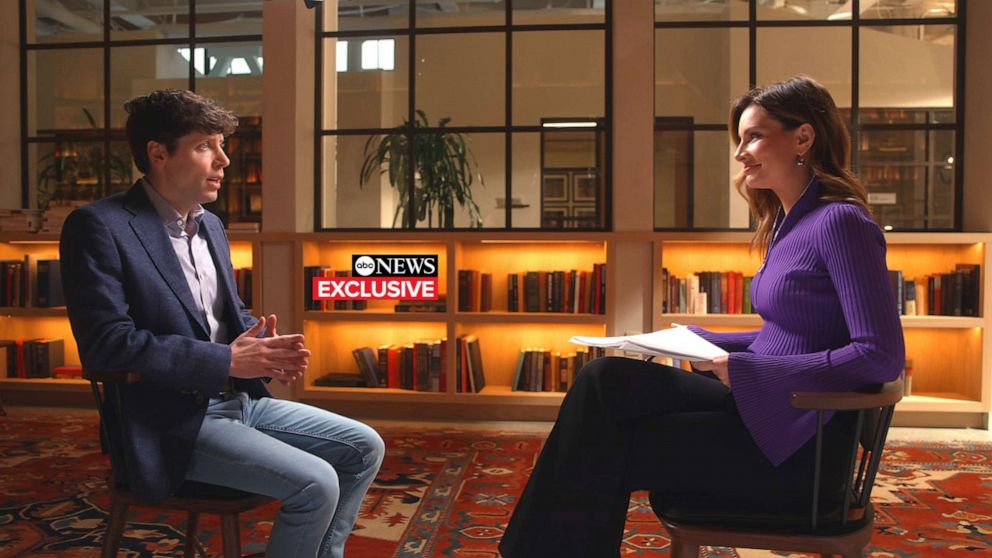

Altman sat down for an exclusive interview with ABC News’ chief business, technology and economics correspondent Rebecca Jarvis to talk about the rollout of GPT-4, the latest iteration of the AI language model.

In his interview, Altman was emphatic that OpenAI needs both regulators and society to be as involved as possible with the rollout of ChatGPT, insisting that feedback will help deter the potential negative consequences the technology could have on humanity. He added that he is in “regular contact” with government officials.

ChatGPT is an AI language model; the GPT stands for Generative Pre-trained Transformer.

Released only a few months ago, it is already considered the fastest-growing consumer application in history. The app hit 100 million monthly active users in just a few months. In comparison, TikTok took nine months to reach that many users and Instagram took nearly three years, according to a UBS study.

Watch the exclusive interview with Sam Altman on “World News Tonight with David Muir” at 6:30 p.m. ET on ABC.

Though “not perfect,” per Altman, GPT-4 scored in the 90th percentile on the Uniform Bar Exam. It also earned a near-perfect score on the SAT Math test, and it can now proficiently write computer code in most programming languages.

GPT-4 is just one step toward OpenAI’s goal of eventually building Artificial General Intelligence, the point at which AI crosses a powerful threshold that could be described as AI systems that are generally smarter than humans.

Though he celebrates the success of his product, Altman acknowledged the possible dangerous implementations of AI that keep him up at night.

OpenAI CEO Sam Altman speaks with ABC News’ chief business, technology & economics correspondent Rebecca Jarvis, Mar. 15, 2023.

ABC News

“I’m particularly worried that these models could be used for large-scale disinformation,” Altman said. “Now that they’re getting better at writing computer code, [they] could be used for offensive cyberattacks.”

A common sci-fi fear that Altman doesn’t share: AI models that don’t need humans, that make their own decisions and plot world domination.

“It waits for someone to give it an input,” Altman said. “This is a tool that is very much in human control.”

However, he said he does fear which humans could be in control. “There will be other people who don’t put some of the safety limits that we put on,” he added. “Society, I think, has a limited amount of time to figure out how to react to that, how to regulate that, how to handle it.”

President Vladimir Putin is quoted telling Russian students on their first day of school in 2017 that whoever leads the AI race would likely “rule the world.”

“So that’s a chilling statement for sure,” Altman said. “What I hope, instead, is that we successively develop more and more powerful systems that we can all use in different ways that integrate it into our daily lives, into the economy, and become an amplifier of human will.”

Concerns about misinformation

According to OpenAI, GPT-4 has massive improvements over the previous iteration, including the ability to understand images as input. Demos show GPT-4 describing what’s in someone’s fridge, solving puzzles, and even articulating the meaning behind an internet meme.

This feature is currently accessible only to a small set of users, including a group of visually impaired users who are part of its beta testing.

But a consistent issue with AI language models like ChatGPT, according to Altman, is misinformation: The program can give users factually inaccurate information.

OpenAI CEO Sam Altman speaks with ABC News, Mar. 15, 2023.

ABC News

“The thing that I try to caution people the most is what we call the ‘hallucinations problem,’” Altman said. “The model will confidently state things as if they were facts that are entirely made up.”

The model has this issue, in part, because it uses deductive reasoning rather than memorization, according to OpenAI.

“One of the biggest differences that we saw from GPT-3.5 to GPT-4 was this emergent ability to reason better,” Mira Murati, OpenAI’s Chief Technology Officer, told ABC News.

“The goal is to predict the next word – and with that, we’re seeing that there is this understanding of language,” Murati said. “We want these models to see and understand the world more like we do.”

“The right way to think of the models that we create is a reasoning engine, not a fact database,” Altman said. “They can also act as a fact database, but that’s not really what’s special about them – what we want them to do is something closer to the ability to reason, not to memorize.”

Altman and his team hope “the model will become this reasoning engine over time,” he said, eventually being able to use the internet and its own deductive reasoning to separate fact from fiction. GPT-4 is 40% more likely to produce accurate information than its previous version, according to OpenAI. Still, Altman said relying on the system as a primary source of accurate information “is something you should not use it for,” and he encourages users to double-check the program’s results.

Precautions against bad actors

The type of information ChatGPT and other AI language models contain has also been a point of concern: for instance, whether or not ChatGPT could tell a user how to make a bomb. The answer is no, per Altman, because of the safety measures coded into ChatGPT.

“A thing that I do worry about is … we’re not going to be the only creator of this technology,” Altman said. “There will be other people who don’t put some of the safety limits that we put on it.”

There are a few solutions and safeguards to all of these potential hazards with AI, per Altman. One of them: Let society toy with ChatGPT while the stakes are low, and learn from how people use it.

Right now, ChatGPT is available to the public primarily because “we’re gathering a lot of feedback,” according to Murati.

As the public continues to test OpenAI’s applications, Murati says it becomes easier to identify where safeguards are needed.

“What are people using them for, but also what are the issues with it, what are the downfalls, and being able to step in [and] make improvements to the technology,” says Murati. Altman says it’s important that the public gets to interact with each version of ChatGPT.

“If we just developed this in secret, in our little lab here, and made GPT-7 and then dropped it on the world all at once … That, I think, is a situation with a lot more downside,” Altman said. “People need time to update, to react, to get used to this technology [and] to understand where the downsides are and what the mitigations can be.”

Regarding illegal or morally objectionable content, Altman said they have a team of policymakers at OpenAI who decide what information goes into ChatGPT, and what ChatGPT is allowed to share with users.

“[We’re] talking to various policy and safety experts, getting audits of the system to try to address these issues and put something out that we think is safe and good,” Altman added. “And again, we won’t get it perfect the first time, but it’s so important to learn the lessons and find the edges while the stakes are relatively low.”

Will AI replace jobs?

Among the concerns about the destructive capabilities of this technology is the replacement of jobs. Altman says AI will likely replace some jobs in the near future, and he worries about how quickly that could happen.

“I think over a couple of generations, humanity has proven that it can adapt wonderfully to major technological shifts,” Altman said. “But if this happens in a single-digit number of years, some of these shifts … That is the part I worry about the most.”

But he encourages people to look at ChatGPT as more of a tool, not as a replacement. He added that “human creativity is limitless, and we find new jobs. We find new things to do.”

The ways ChatGPT can be used as a tool for humanity outweigh the risks, according to Altman.

“We can all have an incredible educator in our pocket that’s customized for us, that helps us learn,” Altman said. “We can have medical advice for everybody that is beyond what we can get today.”

ChatGPT as ‘co-pilot’

In education, ChatGPT has become controversial, as some students have used it to cheat on assignments. Educators are torn on whether it could be used as an extension of themselves, or whether it deters students’ motivation to learn for themselves.

“Education is going to have to change, but it’s happened many other times with technology,” said Altman, adding that students will be able to have a sort of teacher that goes beyond the classroom. “One of the ones that I’m most excited about is the ability to provide individual learning, great individual learning for each student.”

In any field, Altman and his team want users to think of ChatGPT as a “co-pilot,” someone who could help you write extensive computer code or solve problems.

“We can have that for every profession, and we can have a much higher quality of life, like standard of living,” Altman said. “But we can also have new things we can’t even imagine today, so that’s the promise.”